The dictionary defines the word network as “a group or system of interconnected people or things.” Similarly, in the computer world, the term network means two or more connected computers that can share resources such as data and applications, office machines, an Internet connection, or some combination of these.

The Local Area Network

Just as the name implies, a local area network (LAN) is usually restricted to spanning a particular geographic location such as an office building, a single department within a corporate office, or even a home office.

Back in the day, you couldn’t put more than 30 workstations on a LAN, and you had to cope with strict limitations on how far those machines could actually be from each other. Because of technological advances, all that’s changed now, and we’re not nearly as restricted in regard to both a LAN’s size and the distance a LAN can span. Even so, it’s still best to split a big LAN into smaller logical zones known as workgroups to make administration easier.

In a typical business environment, it’s a good idea to arrange your LAN’s workgroups along department divisions; for instance, you would create a workgroup for Accounting, another one for Sales, and maybe another for Marketing. You get the idea.

Common Computer Network Components

There are a lot of different machines, devices, and media that make up our networks. Let’s talk about three of the most common:

■ Workstations

■ Servers

■ Hosts

Workstations

Workstations are often seriously powerful computers that run more than one central processing unit (CPU) and whose resources are available to other users on the network to access when needed. Workstations are often employed as systems that end users use on a daily basis. Don’t confuse workstations with client machines, which can be workstations but not always. People often use the terms workstation and client interchangeably. In colloquial terms, this isn’t a big deal; we all do it. But technically speaking, they are different. A client machine is any device on the network that can ask for access to resources like a printer or other hosts from a server or powerful workstation.

Servers

Servers are also powerful computers. They get their name because they truly are “at the service” of the network and run specialized software known as the network operating system to maintain and control the network.

In a good design that optimizes the network’s performance, servers are highly specialized and are there to handle one important labor-intensive job. This is not to say that a single server can’t do many jobs, but more often than not, you’ll get better performance if you dedicate a server to a single task. Here’s a list of common dedicated servers:

- File Server: Stores and dispenses files

- Mail Server: The network’s post office; handles email functions

- Print Server: Manages printers on the network

- Web Server: Manages web-based activities by running Hypertext Transfer Protocol (HTTP) for storing web content and accessing web pages

- Fax Server: The “memo maker” that sends and receives paperless faxes over the network

- Application Server: Manages network applications

- Telephony Server: Handles the call center and call routing and can be thought of as a sophisticated network answering machine

- Proxy Server: Handles tasks in the place of other machines on the network, particularly Internet connections

Hosts

This can be kind of confusing because when people refer to hosts, they really can be referring to almost any type of networking device, including workstations and servers. But if you dig a bit deeper, you’ll find that usually this term comes up when people are talking about resources and jobs that have to do with Transmission Control Protocol/Internet Protocol (TCP/IP). The scope of possible machines and devices is so broad because, in TCP/IP-speak, host means any network device with an IP address. Yes, you’ll hear IT professionals throw this term around pretty loosely; for the Network+ exam, stick to the definition of hosts as network devices, including workstations and servers, with IP addresses.

Here’s a bit of background: The name host harks back to the Jurassic period of networking when those dinosaurs known as mainframes were the only intelligent devices able to roam the network. These were called hosts whether they had TCP/IP functionality or not. In that bygone age, everything else in the network-scape was referred to as dumb terminals because only mainframes—hosts—were given IP addresses. Another fossilized term from way back then is gateways, which was used to talk about any Layer 3 machines like routers. We still use these terms today, but they’ve evolved a bit to refer to the many intelligent devices populating our present-day networks, each of which has an IP address. This is exactly the reason you hear host used so broadly.

Wide Area Network

There are legions of people who, if asked to define a wide area network (WAN), just couldn’t do it. Yet most of them use the big dog of all WANs, the Internet, every day. With that in mind, you can imagine that WANs are what we use to span large geographic areas and truly go the distance. Like the Internet, WANs usually employ both routers and public links, so those are generally the criteria used to define them.

Here’s a list of some of the important ways that WANs are different from LANs:

- WANs usually need a router port or ports.

- WANs span larger geographic areas and/or can link disparate locations.

- WANs are usually slower.

- We can choose when and how long we connect to a WAN. A LAN is all or nothing: our workstation is connected to it either permanently or not at all. That said, most of us have dedicated WAN links now.

- WANs can utilize either private or public data transport media such as phone lines.

We get the word Internet from the term internetwork. An internetwork is a type of LAN and/or WAN that connects a bunch of networks, or intranets. In an internetwork, hosts still use hardware addresses to communicate with other hosts on the LAN. However, they use logical addresses (IP addresses) to communicate with hosts on a different LAN (other side of the router).

And routers are the devices that make this possible. Each connection into a router is a different logical network. Figure 1.5 demonstrates how routers are employed to create an internetwork and how they enable our LANs to access WAN resources.
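To make the hardware-versus-logical addressing decision a little more concrete, here is a minimal Python sketch using the standard `ipaddress` module. The function name `delivery_path`, the sample addresses, and the /24 prefix length are assumptions chosen for illustration; real hosts make this decision inside their IP stack, not in application code.

```python
import ipaddress


def delivery_path(src_ip: str, dst_ip: str, prefix_len: int) -> str:
    """Return 'direct' if both hosts share a logical network, else 'via router'.

    Hypothetical helper: it only models the decision a host makes before
    sending, not the actual frame or packet handling.
    """
    # Derive the sender's logical network from its address and prefix length.
    src_network = ipaddress.ip_interface(f"{src_ip}/{prefix_len}").network
    if ipaddress.ip_address(dst_ip) in src_network:
        # Same LAN: the host can reach the destination by hardware address.
        return "direct"
    # Different logical network: the traffic must go through a router.
    return "via router"


print(delivery_path("192.168.10.5", "192.168.10.20", 24))  # direct
print(delivery_path("192.168.10.5", "192.168.20.7", 24))   # via router
```

The two sample calls sit on either side of a router boundary: the first pair shares the 192.168.10.0/24 network, while the second destination belongs to a different logical network.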

The Internet is a prime example of what’s known as a distributed WAN—an internetwork that’s made up of a lot of interconnected computers located in a lot of different places. There’s another kind of WAN, referred to as centralized, that’s composed of a main, centrally located computer or location that remote computers and devices can connect to.

Network Architecture

We’ve developed networking as a way to share resources and information, and how that’s achieved directly maps to the particular architecture of the network operating system software. There are two main network types you need to know about: peer-to-peer and client-server. And by the way, it’s really tough to tell the difference just by looking at a diagram or even by checking out live video of the network humming along. But the differences between peer-to-peer and client-server architectures are pretty major. They’re not just physical; they’re logical differences. You’ll see what I mean in a bit.

Peer-to-Peer Networks

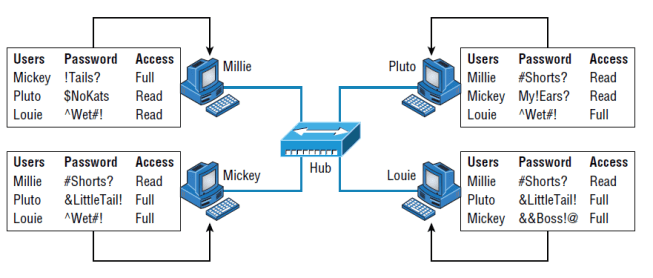

Computers connected together in peer-to-peer networks do not have any central, or special, authority—they’re all peers, meaning that when it comes to authority, they’re all equals. The authority to perform a security check for proper access rights lies with the computer that has the desired resource being requested from it.

It also means that the computers coexisting in a peer-to-peer network can be both client machines that access resources and server machines that provide those resources to other computers. This actually works pretty well as long as there isn’t a huge number of users on the network, if each user backs things up locally, and if your network doesn’t require much security.

If your network is running Windows, Mac, or Unix in a LAN workgroup, you have a peer-to-peer network. Keep in mind that peer-to-peer networks definitely present security-oriented challenges; for instance, just backing up company data can get pretty sketchy!

It should be clear by now that peer-to-peer networks aren’t all sunshine. Backing up all your critical data may be tough, but it’s vital! Haven’t all of us forgotten where we’ve put an important file? And then there’s that glaring security issue to tangle with. Because security is not centrally governed, each and every user has to remember and maintain a list of users and passwords on each and every machine. Worse, the password for the same user can differ from one machine to the next, and even from one resource to the next.
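The scattered-credentials problem can be sketched in a few lines of Python. This is a toy model only: the `Peer` class, the machine names, the user `dana`, and the plain-text passwords are all hypothetical and exist just to show that every peer keeps its own account list and performs its own security check.

```python
class Peer:
    """One machine in a peer-to-peer workgroup (hypothetical toy model)."""

    def __init__(self, name):
        self.name = name
        self.accounts = {}  # username -> password, stored only on this machine
        self.shares = {}    # resource name -> content held by this machine

    def request(self, user, password, resource):
        # The peer that owns the resource performs the security check itself.
        if user not in self.accounts or self.accounts[user] != password:
            raise PermissionError(f"{self.name}: access denied for {user}")
        return self.shares[resource]


accounting = Peer("accounting-pc")
sales = Peer("sales-pc")

# The same user needs a separate account on every machine,
# and nothing keeps the two passwords in sync.
accounting.accounts["dana"] = "pw-one"
accounting.shares["ledger.xlsx"] = "ledger data"
sales.accounts["dana"] = "pw-two"
sales.shares["leads.csv"] = "lead data"

print(accounting.request("dana", "pw-one", "ledger.xlsx"))  # ledger data
```

Presenting the accounting password to the sales machine raises `PermissionError`, which is the day-to-day headache described above: the user has to track which password belongs to which machine.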

Client-Server Networks

Client-server networks are pretty much the polar opposite of peer-to-peer networks because in them, a single server uses a network operating system for managing the whole network. Here’s how it works: A client machine’s request for a resource goes to the main server, which responds by handling security and directing the client to the desired resource.

This happens instead of the request going directly to the machine with the desired resource, and it has some serious advantages. First, because the network is much better organized and doesn’t depend on users remembering where needed resources are, it’s a whole lot easier to find the files you need: everything is stored in one spot, on that special server. Your security also gets a lot tighter because all usernames and passwords are on that specific server, which is never ever used as a workstation. You even gain scalability, since client-server networks can have legions of workstations on them. And surprisingly, with all those demands, the network’s performance is actually optimized. Nice!
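As a rough illustration of that request flow, the following Python sketch models a single server that holds every account and a directory of resource locations. The `CentralServer` class, its `locate` method, the sample user, and the UNC-style path are assumptions made up for this example; a real network operating system uses hashed credentials and a full directory service rather than in-memory dictionaries.

```python
class CentralServer:
    """Toy model of the main server in a client-server network (hypothetical)."""

    def __init__(self):
        self.accounts = {}   # username -> password, kept in one place only
        self.directory = {}  # resource name -> where that resource lives

    def locate(self, user, password, resource):
        # The server handles security first...
        if user not in self.accounts or self.accounts[user] != password:
            raise PermissionError(f"access denied for {user}")
        # ...and then directs the client to the desired resource.
        return self.directory[resource]


server = CentralServer()
server.accounts["dana"] = "one-password"
server.directory["ledger.xlsx"] = "//fileserver/accounting/ledger.xlsx"

# One set of credentials works for every resource the server knows about.
print(server.locate("dana", "one-password", "ledger.xlsx"))
```

Because accounts live in exactly one dictionary here, changing a password or removing a user is a single update, which mirrors the tighter security and easier administration described above.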

Many of today’s networks are hopefully a healthy blend of peer-to-peer and client-server architectures, with carefully specified servers that permit the simultaneous sharing of resources from devices running workstation operating systems. Even though the supporting machines can’t handle as many inbound connections at a time, they still run the server service reasonably well. And if this type of mixed environment is designed well, most networks benefit greatly by having the capacity to take advantage of the positive aspects of both worlds.